PokerML

Updated Nov 2025

Python · FastAPI · phevaluator · CFR+

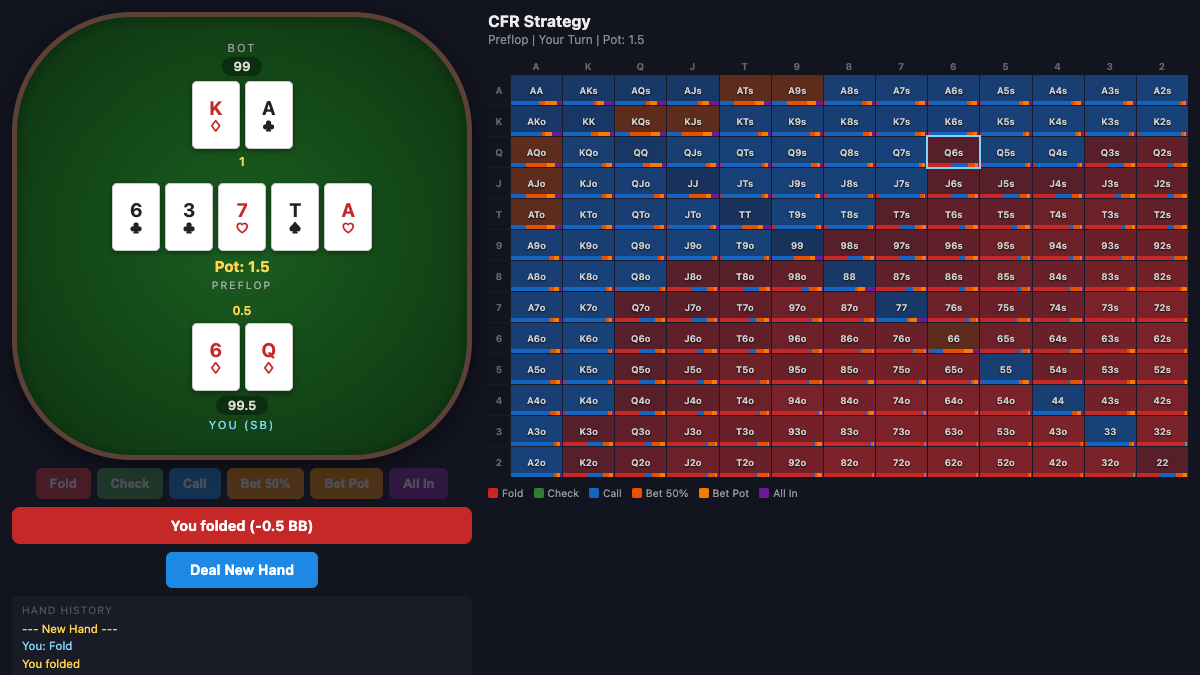

PokerML is a heads-up no-limit hold'em solver built on CFR+ (Counterfactual Regret Minimization with cumulative regrets clamped at zero) and external-sampling Monte Carlo CFR (MCCFR). It trains for 100,000 iterations using k-means preflop bucketing and percentile-based postflop abstraction, then serves the converged strategy through a FastAPI web app where you can play against the bot and inspect its 13x13 range grid in real time.

CFR+ with external sampling: 100K iterations, regret clamping, bucket-cached traversal across all 4 streets

K-means preflop abstraction (10 buckets) + empirical CDF postflop bucketing with sampled board completions

FastAPI web UI: play against the bot with a live 13x13 range heatmap showing action probabilities per hand
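The empirical-CDF postflop bucketing mentioned above can be sketched roughly as follows. This is a minimal illustration, not PokerML's actual code: the function name `percentile_bucket`, the default of 10 buckets, and the idea that strengths are plain floats (for example, equity estimated from sampled board completions) are all assumptions.

```python
from bisect import bisect_right


def percentile_bucket(strength, sampled_strengths, n_buckets=10):
    """Map a hand-strength value to a bucket via its empirical CDF rank.

    `sampled_strengths` is a reference sample of strength values
    (hypothetical input; how it is produced is outside this sketch).
    """
    ordered = sorted(sampled_strengths)
    # Empirical CDF: fraction of reference samples <= this strength.
    cdf = bisect_right(ordered, strength) / len(ordered)
    # Scale to a bucket index and clamp so cdf == 1.0 stays in the top bucket.
    return min(int(cdf * n_buckets), n_buckets - 1)


if __name__ == "__main__":
    reference = [i / 100 for i in range(100)]
    for s in (0.05, 0.5, 0.95):
        print(s, percentile_bucket(s, reference))
```

Bucketing by percentile rather than by raw strength keeps bucket populations roughly equal, which is one common reason to prefer it. In practice the reference sample would be sorted once and reused rather than re-sorted on every call.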

What I built

- CFR+ trainer with external sampling MCCFR, immutable game states, and per-iteration bucket caching for speed

- Two-level card abstraction: k-means on preflop equity (169 hands to 10 buckets) and percentile CDF on postflop hand strength

- Action abstraction with 50% and 100% pot bet sizings, fold/check/call, all-in, and a 4-raise-per-street cap

- FastAPI server with session management, bot inference, and a 13x13 range grid API for the frontend
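As a rough illustration of the action abstraction described above, the sketch below enumerates the abstract actions at a decision point. The function name, the action labels, the `(name, chips)` tuple format, sizing raises relative to the pot after calling, and counting all-in against the raise cap are all assumptions made for this example, not details taken from the project.

```python
def legal_actions(pot, to_call, stack, raises_this_street, max_raises=4):
    """Return abstract actions as (name, chips_committed) tuples.

    All names and conventions here are hypothetical.
    """
    actions = []
    if to_call > 0:
        actions.append(("fold", 0))
        actions.append(("call", min(to_call, stack)))
    else:
        actions.append(("check", 0))

    # Aggressive actions need room under the raise cap and chips beyond a call.
    if raises_this_street < max_raises and stack > to_call:
        for label, fraction in (("bet_50", 0.5), ("bet_100", 1.0)):
            # Assumed convention: size relative to the pot after calling.
            raise_size = int(fraction * (pot + to_call))
            total = to_call + raise_size
            # Sizings that would meet or exceed the stack collapse into all-in.
            if raise_size > 0 and total < stack:
                actions.append((label, total))
        actions.append(("all_in", stack))
    return actions


if __name__ == "__main__":
    print(legal_actions(pot=100, to_call=0, stack=1000, raises_this_street=0))
    print(legal_actions(pot=100, to_call=50, stack=1000, raises_this_street=4))
```

Capping raises per street keeps the game tree finite and small enough to traverse, at the cost of not modeling longer raising wars.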

How it works

1. Deal hands and board, then pre-cache card buckets for both players across all 4 streets

2. Traverse the game tree: enumerate all actions for the traversing player, sample one for the opponent

3. Update cumulative regrets (clamped to zero for CFR+) and accumulate strategy sums

4. After 100K iterations, compress the averaged strategy to JSON and serve it via FastAPI for live play
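The regret update in the steps above can be sketched at a single information set like this. It shows regret matching plus the CFR+ clamp only; the function names are hypothetical, and accumulating the strategy sum unweighted at the same node is a simplification (where and how PokerML accumulates it is not stated here).

```python
def regret_matching(regrets):
    """Play each action in proportion to its positive cumulative regret."""
    positives = [max(r, 0.0) for r in regrets]
    total = sum(positives)
    if total > 0:
        return [p / total for p in positives]
    # No positive regret yet: fall back to a uniform strategy.
    return [1.0 / len(regrets)] * len(regrets)


def cfr_plus_update(regrets, strategy_sum, action_values):
    """Update one information set in place and return its expected value.

    `action_values` holds the traversing player's value for each action,
    as returned by the recursive tree traversal (not shown).
    """
    strategy = regret_matching(regrets)
    node_value = sum(p * v for p, v in zip(strategy, action_values))
    for a, value in enumerate(action_values):
        # CFR+ clamp: cumulative regret is never allowed below zero.
        regrets[a] = max(regrets[a] + (value - node_value), 0.0)
        # Simplified, unweighted accumulation of the current strategy.
        strategy_sum[a] += strategy[a]
    return node_value


def average_strategy(strategy_sum):
    """Normalize accumulated strategy sums into the averaged strategy."""
    total = sum(strategy_sum)
    if total > 0:
        return [s / total for s in strategy_sum]
    return [1.0 / len(strategy_sum)] * len(strategy_sum)


if __name__ == "__main__":
    regrets, sums = [0.0, 0.0], [0.0, 0.0]
    for _ in range(2):
        cfr_plus_update(regrets, sums, [1.0, -1.0])
    print(regrets, average_strategy(sums))
```

The averaged strategy, not the final iteration's strategy, is what gets served: in CFR-style algorithms it is the average that carries the convergence guarantee.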

Results

- ✓ Converged strategy stored in an 18.5 MB compressed file, produced by a codebase of ~1,173 lines of Python

- ✓ Interactive web UI lets you play full hands and inspect the bot's action distribution for any of 169 canonical hands
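For reference, the 169 canonical hands fill a 13x13 grid exactly. The sketch below assumes the common layout (pairs on the diagonal, suited hands above it, offsuit hands below it); the function names are hypothetical and PokerML's grid API may order or label cells differently.

```python
RANKS = "AKQJT98765432"  # high to low


def range_grid_labels():
    """Build 13x13 labels: pairs on the diagonal, suited above, offsuit below."""
    grid = []
    for i, row_rank in enumerate(RANKS):
        row = []
        for j, col_rank in enumerate(RANKS):
            if i == j:
                row.append(row_rank + col_rank)        # pocket pair, e.g. "AA"
            elif i < j:
                row.append(row_rank + col_rank + "s")  # suited, e.g. "AKs"
            else:
                row.append(col_rank + row_rank + "o")  # offsuit, e.g. "AKo"
        grid.append(row)
    return grid


def combo_count(label):
    """Number of concrete two-card combinations behind a canonical label."""
    if len(label) == 2:
        return 6                      # pairs: C(4, 2) suit combinations
    return 4 if label.endswith("s") else 12


if __name__ == "__main__":
    for row in range_grid_labels():
        print(" ".join(f"{label:<3}" for label in row))
```

A heatmap over this grid would then color each cell by the strategy's action probabilities for that hand.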

Next steps

- Add convergence speedups (pruning, warm starting) and variance reduction techniques

- Expand action abstraction with more bet sizings and raise depths